Build AI agents on Anyscale

This page provides an overview of building AI agents on Anyscale, including the recommended decoupled architecture and how to choose between single-agent and multi-agent patterns.

What is an AI agent?

An AI agent is an application that uses an LLM as a reasoning engine to plan and execute multi-step tasks by calling tools and interacting with external systems. Unlike a standalone chatbot, an agent decides which tool to call, when to call it, and how to combine results into a final answer.

A typical agent loop does the following:

- Receives a user request.

- Calls an LLM to decide whether to use a tool, ask a follow-up, or respond directly.

- Executes any tool calls such as a database query, web search, or API request, then feeds results back to the LLM.

- Repeats until the LLM produces a final answer.
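
The loop above can be sketched in a few lines of plain Python. This is a minimal illustration, not Anyscale's or any framework's implementation: `fake_llm`, `TOOLS`, `get_weather`, and `run_agent` are hypothetical names, and the stand-in LLM is hard-coded to request one tool call before answering.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> callable. A real agent would expose
# tools through MCP or a framework; plain functions keep the sketch runnable.
TOOLS: dict[str, Callable[[str], str]] = {
    "get_weather": lambda city: f"Sunny in {city}",
}


def fake_llm(messages: list[dict]) -> dict:
    """Stand-in for an LLM call: request a tool once, then answer."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {"type": "tool_call", "name": "get_weather", "argument": "Paris"}
    return {"type": "final", "content": f"Forecast: {tool_results[-1]['content']}"}


def run_agent(user_request: str, llm=fake_llm, max_steps: int = 5) -> str:
    # Step 1: receive the user request.
    messages = [{"role": "user", "content": user_request}]
    for _ in range(max_steps):
        # Step 2: let the LLM decide between a tool call and a final answer.
        decision = llm(messages)
        if decision["type"] == "final":
            # Step 4: stop once the LLM produces a final answer.
            return decision["content"]
        # Step 3: execute the tool call and feed the result back to the LLM.
        result = TOOLS[decision["name"]](decision["argument"])
        messages.append({"role": "tool", "content": result})
    raise RuntimeError("agent did not finish within max_steps")


print(run_agent("What's the weather in Paris?"))
```

The `max_steps` cap is a design choice added for the sketch so that a misbehaving model cannot loop forever.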

Agents extend this pattern in two important directions. Single agents call tools that you expose through the Model Context Protocol (MCP). Multi-agent systems split work across multiple specialized agents, where each agent owns its own tools, prompts, and scaling profile. The reference multi-agent architecture on Anyscale uses the Agent-to-Agent (A2A) protocol for coordination (see A2A protocol documentation), but A2A is one option among many.

Quickstart

You can run templates for both patterns directly from the Anyscale console.

How does Anyscale support agents?

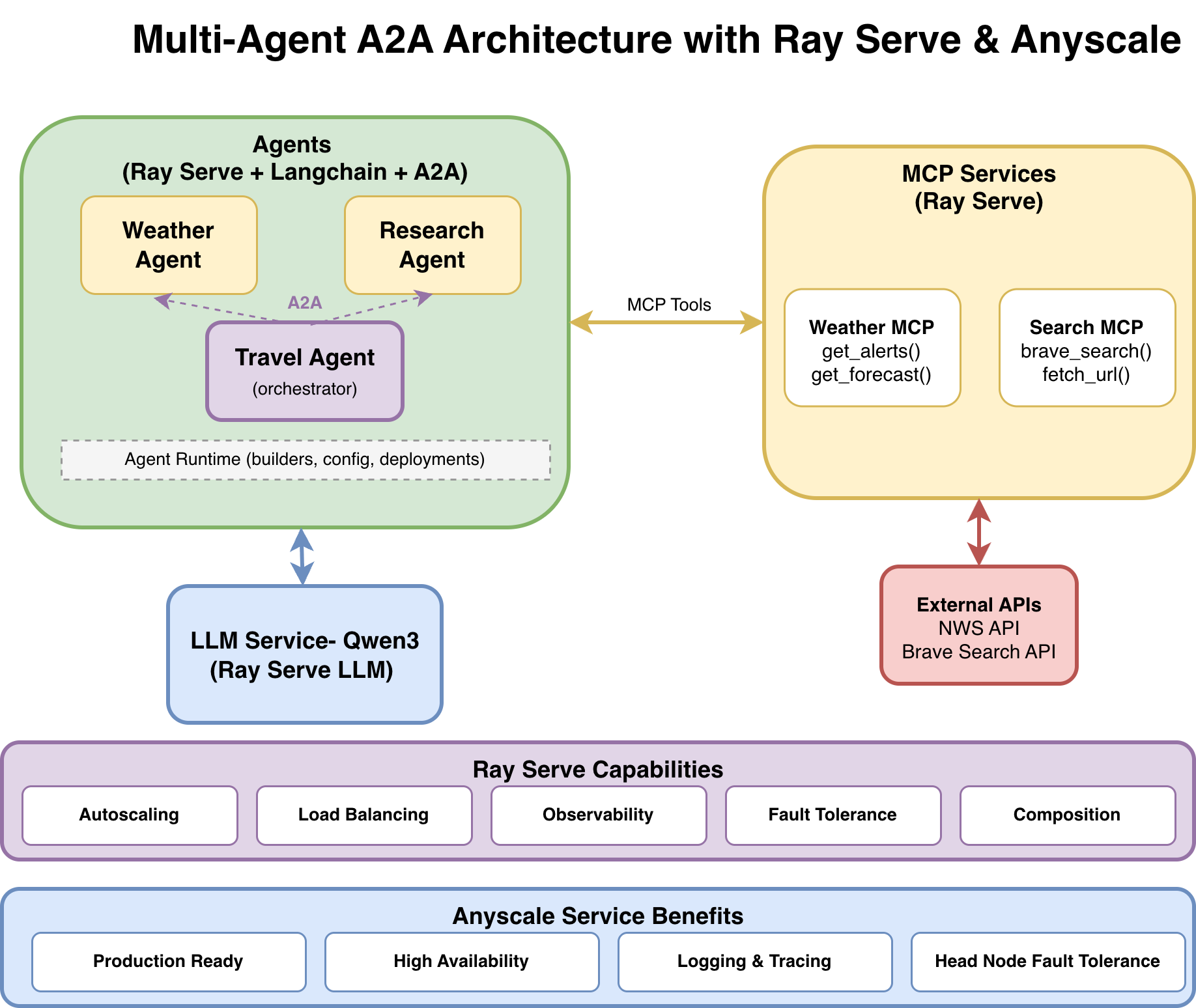

Anyscale runs each component of an agent as an independent Ray Serve application backed by an Anyscale service. The recommended decoupled microservices architecture splits responsibilities across the following layers:

- An LLM service runs the model with Ray Serve LLM and vLLM behind an OpenAI-compatible endpoint. The LLM service owns GPU resources and supports vLLM features such as continuous batching, PagedAttention, tool calling, and structured output. See Serve LLMs with Anyscale services, Configure tool and function calling for LLMs, and Configure structured output for LLMs.

- One or more MCP tool services wrap external systems such as APIs, databases, or search engines. Each service exposes its tools through the streamable HTTP transport so any MCP-compatible agent can discover and call them. For scalable deployments, run the MCP server in stateless mode and deploy it with Ray Serve. See Basics of MCP, Deploy scalable MCP servers with Ray Serve, and Ray Serve on the Anyscale Runtime.

- An agent service runs the orchestration logic. Frameworks such as LangChain and LangGraph compose the LLM and MCP tools into a reasoning loop, manage conversation state, and stream results to clients over server-sent events (SSE). Use Anyscale services for availability-zone-aware scheduling, bearer-token authentication, centralized logging and tracing, and optional head node fault tolerance for supported cloud deployments. See What are Anyscale services?, Configure head node fault tolerance, and Tracing guide.

Together, these components keep model inference, tool execution, and orchestration independently deployable.
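
To make the boundary between the agent service and the LLM service concrete, the sketch below builds the kind of request body an agent might send to an OpenAI-compatible chat completions endpoint with one tool attached. The model name `my-model`, the `get_weather` tool, and the `build_chat_request` helper are assumptions for illustration. The service URL and bearer token are deployment-specific and are deliberately left out.

```python
import json


def build_chat_request(model: str, user_message: str, tools: list[dict]) -> dict:
    """Build a chat completions body in the OpenAI-compatible format."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
        # Each tool is wrapped as a "function" entry with a JSON Schema
        # describing its parameters.
        "tools": [{"type": "function", "function": tool} for tool in tools],
    }


# Hypothetical tool definition; a real agent would typically derive this
# from the tools its MCP services advertise.
weather_tool = {
    "name": "get_weather",
    "description": "Look up the current weather for a city.",
    "parameters": {
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
}

request_body = build_chat_request("my-model", "Weather in Paris?", [weather_tool])
print(json.dumps(request_body, indent=2))
```

In a deployment, the agent service would POST this body to the LLM service's chat completions route and include the service's bearer token in the `Authorization` header. Frameworks such as LangChain usually build this request for you.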

Single-agent versus multi-agent patterns

The right architecture depends on how much your workload benefits from specialization. The following table compares the two patterns:

| Pattern | When to use | Example |
|---|---|---|
| Single tool-using agent | Your agent solves a focused problem and the tools it needs share a single domain. One LLM, one set of tools, one prompt. | A weather assistant that calls weather APIs, or a support agent that queries a single ticket database. |
| Multi-agent system | Your workload spans multiple domains, each with its own tools, prompt strategy, and quality bar. You want to compose specialized agents instead of overloading one monolithic agent. | A travel planner that delegates research to a web-search agent and forecasts to a weather agent, then synthesizes a final itinerary. |
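
The travel planner example in the table can be sketched as a coordinator that delegates to specialized agents and then combines their results. This is an in-process illustration only: `research_agent`, `weather_agent`, `SPECIALISTS`, and `travel_planner` are hypothetical names, the specialists return canned strings, and a real deployment would run each agent as its own service and coordinate over a protocol such as A2A.

```python
from typing import Callable


# Hypothetical specialists. Each one would normally own its own tools,
# prompt, and scaling profile behind a separate service.
def research_agent(destination: str) -> str:
    return f"Top sights in {destination}: old town, harbor"


def weather_agent(destination: str) -> str:
    return f"Forecast for {destination}: mild and dry"


SPECIALISTS: dict[str, Callable[[str], str]] = {
    "research": research_agent,
    "weather": weather_agent,
}


def travel_planner(destination: str) -> str:
    """Coordinator: delegate to every specialist, then synthesize one answer."""
    findings = {name: agent(destination) for name, agent in SPECIALISTS.items()}
    return " | ".join(f"{name}: {text}" for name, text in findings.items())


itinerary = travel_planner("Lisbon")
print(itinerary)
```

Because the coordinator only depends on the registry, adding or replacing a specialist does not change the planner itself.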

Start with a single agent. Move to a multi-agent system when prompts grow unwieldy, when one agent's tools start crowding out another's, or when you need to scale or version pieces of the workflow independently. The following diagram shows the reference multi-agent pattern.

Why decouple agent services?

Running agents on Anyscale means each layer scales and fails independently. The LLM service is GPU-bound and expensive, so it scales with inference demand. The lightweight agent and tool services are CPU-bound, so they scale with request volume. If a tool service fails, the agent can handle the partial failure without taking down the whole system.
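
One way an agent can handle a partial failure is to wrap each tool call, record which calls succeeded, and return a degraded answer instead of raising. The sketch below assumes two hypothetical tools, `weather_lookup` and `flaky_search`. The second always fails to simulate an unavailable tool service.

```python
def call_tool_safely(tool, argument, fallback):
    """Run a tool; on failure, return a fallback message instead of raising."""
    try:
        return tool(argument), True
    except Exception as exc:
        # Keep the error type so that logs and traces show what went wrong.
        return f"{fallback} ({type(exc).__name__})", False


def weather_lookup(city):
    return f"Sunny in {city}"


def flaky_search(query):
    # Simulates a tool service that is currently down.
    raise ConnectionError("search service unavailable")


def answer(city):
    weather, weather_ok = call_tool_safely(weather_lookup, city, "weather unavailable")
    news, news_ok = call_tool_safely(flaky_search, city, "search unavailable")
    status = "complete" if weather_ok and news_ok else "partial"
    return {"status": status, "weather": weather, "news": news}


result = answer("Paris")
print(result)
```

Here the agent still returns the weather result and marks the response as partial, so the failing search tool does not fail the whole request.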

Anyscale services also harden the deployment for real traffic. They support zero-downtime rolling updates, distributed tracing across agent, tool, and LLM calls, and per-replica logs that make agent reasoning loops debuggable. See Update an Anyscale service and Monitor a service.

Related documentation

The following pages cover the components that agents on Anyscale build on:

- For LLM serving with Ray Serve and vLLM, see Serve LLMs with Anyscale services.

- For tool and function calling configuration, see Configure tool and function calling for LLMs.

- For an introduction to MCP and its role in agent tool integration, see Basics of MCP.

- For deploying MCP servers as Anyscale services, see Deploy scalable MCP servers with Ray Serve.

- For the production capabilities of Anyscale services, see What are Anyscale services?.